Exercise-3: Direct vs step down method of cost allocation

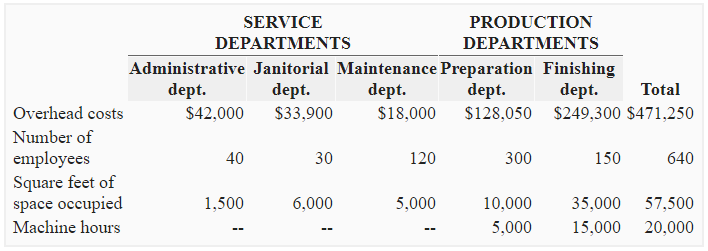

Prozma Company has three service departments and two operating departments. The following is the selected data of five departments:

Required:

- Allocate the costs of the service departments to the operating departments, assuming the company uses the direct method. The allocation bases are: number of employees for the administrative department, square feet of space occupied for the janitorial department, and machine hours for the maintenance department.

- Allocate the costs of the service departments to the operating departments, assuming the company uses the step down method and allocates the costs in the following order: (1) administrative department (number of employees), (2) janitorial department (square feet of space occupied), (3) maintenance department (machine hours).

Solution

1. If the company uses the direct method of cost allocation:

Distribution rates:

Administrative department:

$42,000/450 employees* = $93.33333 per employee

*300 + 150 = 450 employees

Janitorial department:

$33,900/45,000 sq. ft.* = $0.75333 per sq. ft.

*10,000 + 35,000 = 45,000 sq. ft.

Maintenance department:

$18,000/20,000 machine hours* = $0.90 per machine hour

*5,000 + 15,000 = 20,000 machine hours

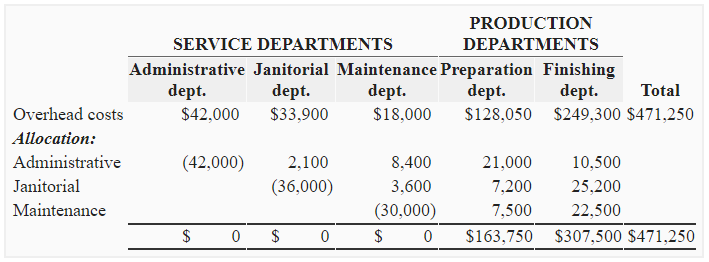
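The direct-method calculation can be sketched in a few lines of Python. This is only an illustration: the labels `Operating 1` and `Operating 2` are placeholders (the department names appear only in the data table), and the pairing of figures with each operating department is assumed from the order in which they appear in the footnotes above.

```python
# Service department costs, taken from the rate calculations above.
service_costs = {"Administrative": 42_000, "Janitorial": 33_900, "Maintenance": 18_000}

# Allocation base used by each operating department.
# "Operating 1" / "Operating 2" are placeholder names (assumption).
usage = {
    "Administrative": {"Operating 1": 300, "Operating 2": 150},      # employees
    "Janitorial": {"Operating 1": 10_000, "Operating 2": 35_000},    # sq. ft.
    "Maintenance": {"Operating 1": 5_000, "Operating 2": 15_000},    # machine hours
}


def direct_allocation(costs, usage):
    """Allocate each service cost to operating departments only."""
    rates = {}
    allocated = {}
    for dept, cost in costs.items():
        base = sum(usage[dept].values())   # operating departments only
        rates[dept] = cost / base
        for op, units in usage[dept].items():
            allocated[op] = allocated.get(op, 0.0) + rates[dept] * units
    return rates, allocated


direct_rates, direct_totals = direct_allocation(service_costs, usage)
for op, amount in direct_totals.items():
    print(f"{op}: ${amount:,.2f}")
```

Under the direct method, services that one service department provides to another are ignored, so the amounts allocated to the two operating departments should add up to the full $93,900 of service department costs.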

2. If the company uses the step down method of cost allocation:

Distribution rates:

Administrative department:

$42,000/600 employees* = $70 per employee

*30 + 120 + 300 + 150 = 600 employees

Janitorial department:

$36,000/50,000 sq.ft.* = $0.72 per sq. ft.

*5,000 + 10,000 + 35,000 = 50,000 sq. ft.

Maintenance department:

$30,000/20,000 machine hours* = $1.50 per hour

*5,000 + 15,000 = 20,000 hours
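The step-down figures can be traced with a short Python sketch. It rests on a few assumptions: `Operating 1` and `Operating 2` are placeholder names, the 30 and 120 employees are taken to belong to the janitorial and maintenance departments respectively (this reproduces the $36,000 and $30,000 cost pools used above), and the operating departments start at zero so that only allocated service costs are tracked.

```python
# Allocation order given in the exercise.
order = ["Administrative", "Janitorial", "Maintenance"]

# Own costs of the service departments; operating departments start at 0
# so the result shows allocated service costs only (assumption).
costs = {
    "Administrative": 42_000.0,
    "Janitorial": 33_900.0,
    "Maintenance": 18_000.0,
    "Operating 1": 0.0,   # placeholder name
    "Operating 2": 0.0,   # placeholder name
}

# Each department allocates only to departments later in the order.
usage = {
    "Administrative": {"Janitorial": 30, "Maintenance": 120,
                       "Operating 1": 300, "Operating 2": 150},      # employees
    "Janitorial": {"Maintenance": 5_000,
                   "Operating 1": 10_000, "Operating 2": 35_000},    # sq. ft.
    "Maintenance": {"Operating 1": 5_000, "Operating 2": 15_000},    # machine hours
}


def step_down(costs, usage, order):
    """Allocate service costs in sequence; a closed department receives nothing."""
    balances = dict(costs)
    rates = {}
    for dept in order:
        pool = balances[dept]              # own cost plus costs received so far
        rates[dept] = pool / sum(usage[dept].values())
        for receiver, units in usage[dept].items():
            balances[receiver] += rates[dept] * units
        balances[dept] = 0.0               # department is now fully allocated
    return rates, balances


step_rates, step_balances = step_down(costs, usage, order)
for dept in order:
    print(f"{dept}: {step_rates[dept]:.2f} per unit of base")
```

With these inputs the janitorial pool grows to $33,900 + 30 × $70 = $36,000 and the maintenance pool to $18,000 + 120 × $70 + 5,000 × $0.72 = $30,000 before each is allocated, which is why the rates differ from those of the direct method.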
